Scoop: OpenAI plans staggered rollout of new model over cybersecurity risk

Quick Insights

The Bottom Line

OpenAI plans a staggered release of a new AI model due to cybersecurity risks.

How This Affects You

Limiting access to advanced AI models is meant to keep their hacking capabilities from causing widespread havoc.

AI Summary

OpenAI is finalizing a model with advanced cybersecurity capabilities and plans to release it only to a small set of companies, according to a source familiar with the matter. The staggered rollout mirrors Anthropic's limited release of its new Mythos Preview model, announced on Tuesday and restricted over fears of its advanced hacking capabilities. OpenAI introduced its "Trusted Access for Cyber" pilot program in February after rolling out GPT-5.3-Codex, committing $10 million in API credits to participants. The cautious approach reflects concerns among model-makers about the havoc their tools could cause if released widely. Anthropic has said Mythos Preview will not be publicly released, but it remains unclear whether OpenAI will eventually broaden access to its forthcoming model.

What's Being Done

OpenAI introduced a "Trusted Access for Cyber" pilot program and committed $10 million in API credits to participants.

You Might Have Missed

Related stories from different sources and perspectives

AI & Warfare

Anthropic's newest AI model could wreak havoc. Most in power aren't ready

Anthropic has begun a tightly controlled release of Mythos, the first AI model that officials believe is capable of bringing down a Fortune 100 company, crippling swaths of the internet or penetrating vital national defense systems.

Why it matters: This is the scary phase of AI: a model deemed so powerful that its full release into the wild could unleash untold catastrophe. So only carefully vetted companies and organizations, about 40 so far, are getting access.

Based on our conversations with government and private-sector officials briefed on Mythos, this ...

Technology

Anthropic's powerful new AI model raises concerns about high-tech risks

Anthropic announced that it has started a very limited test of its newest AI model called Mythos. It's a model deemed so powerful that the company warned it could cause widespread disruption if it were released to the public. Anthropic is giving some companies access to Mythos to test and identify vulnerabilities, a move that is raising concerns. Geoff Bennett discussed more with Gerrit De Vynck.

Technology

Anthropic claims newest AI model, Claude Mythos, is too powerful for public release

Anthropic says its newest AI model, Claude Mythos, is too powerful and dangerous to be released to the public. Tech journalist Jacob Ward joins CBS News to discuss.

Technology

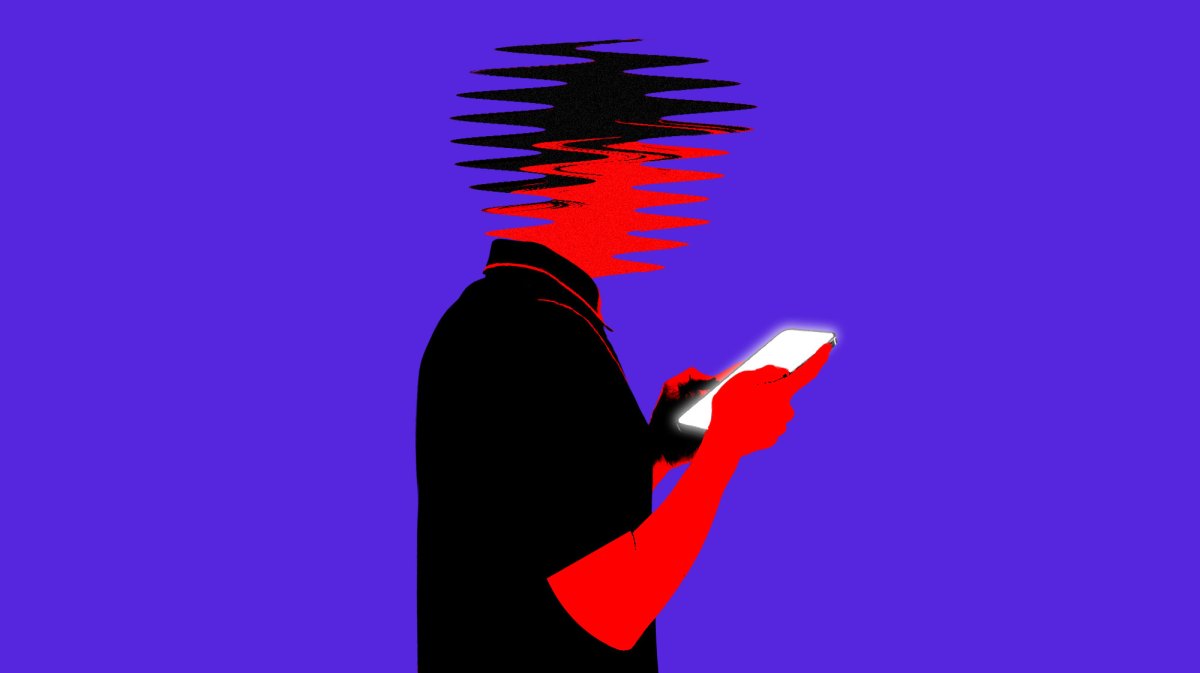

Family of man killed in shooting at Florida State University to sue ChatGPT and OpenAI

Lawyers for Robert Morales’s family said chatbot ‘may have advised the shooter’ on how to carry out shooting

The family of a man who was killed at Florida State University last year plans to sue ChatGPT and its parent organization, OpenAI, for allegedly telling the accused gunman how to carry out the mass shooting.

Lawyers for the family of Robert Morales wrote in a statement they had learned the shooter was in “constant communication with ChatGPT” ahead of the shooting, and that the chatbot “may have advised the shooter how to commit these heinous crimes”.

AI & Warfare

Anthropic touts AI cybersecurity project with Big Tech partners - Reuters

Government Transparency

“A Slap in the Face”: Trump’s DOJ Plans to Settle Predatory Lending Case Without Compensating Victims

The Chilling Role of ChatGPT in Mass Shootings and Other Violence

In June 2025, a safety team at OpenAI grew alarmed. The company’s automated review system had flagged extensive activity by a ChatGPT user describing scenarios that involved gun violence. A group of staffers debated whether law enforcement should be notified, but company leaders decided the case did not meet OpenAI’s threshold of “credible and imminent” […]

The Verity Ledger curates verified investigative journalism from trusted sources only.
